ERC Advanced Grant 2017

3D TeraHErtz & Spectrometry Manuscript Analysis

A new method to study ancient and modern manuscripts

3D T H E S M A PROJECT

SECTION A. OBJECTIVES AND STATE OF THE ART

A.1. “3D Thesma project” objectives

A.2. The use of advanced digital techniques on artistic artefacts

A.3. The development and potentialities of the philological disciplines

A.4. A new method to study manuscripts

SECTION A. OBJECTIVES AND STATE OF THE ART

A.1. “3D THESMA PROJECT” OBJECTIVES

With the 3D THESMA PROJECT prototype, it will be possible to:

A.2. THE USE OF ADVANCED DIGITAL TECHNIQUES ON ARTISTIC ARTEFACTS

From a new, multidisciplinary perspective, manuscripts do not have only two dimensions (“X” and “Y”); they also have a third “Z” dimension, the crucial time addendum, which is no longer to be considered immaterial, or scientifically unverifiable. On the contrary, it is material and can be measured using technical instruments. How can this be achieved? A fruitful combination of photonics and philology has been proven to be the way to analyse the third “Z” dimension, through a thorough analysis of the series of corrections applied to a manuscript over the course of its life, either by the author himself/herself, from the first draft to his/her “last will”, or by someone else who has handled the text: copyists, glossators and annotators, in addition, in the Gutenberg era, to editors, publishers, censors or revisers.

Photonics allows us to bring to light new data about the support, inks and stratigraphy of the corrections, while philology, especially the Italian ‘authorial philology’ method (www.filologiadautore.it), provides the theoretical perspective to interpret and represent the corrections and annotations in open access Digital Critical Editions. The latter,

In recent years, advanced methodologies (infrared, spectrophotometry, XRF, etc.) have been applied to artistic artefacts (paintings, sculptures, archaeological findings) and, in some cases, to forensic analyses (from the Multispectral Miniaturizing Microscope to Conoscopic Holography). However, they have only been used separately, and the three main detection methodologies (3D scanning, Terahertz and Spectrometry) have never been applied in combination to ancient or modern parchment and paper manuscripts. As can be seen in the Principal Investigator (PI)’s multidisciplinary digital philology projects, the first results of this innovative multidisciplinary use of photonics are promising and need to be extended. This would make it possible to carry out a thorough study of the materials and inks without directly interfering with the object or damaging it in any way, offering a solution to various philological case studies that remain unsolved, and providing scholars with a new method to study manuscripts, leading to a radical revival of studies in this field.

The theoretical framework for the representation and study of manuscript variants is provided by the Italian philological school of authorial philology (www.filologiadautore.it), which is at the forefront of this field, thanks to studies by Gianfranco Contini and Dante Isella. Since 1927 (with the birth of authorial philology), this philological school has undertaken a study of variants and corrections, according to the idea that the “value” of a text does not lie only in its final shape (the “last will” of the author), but rather in all the phases the manuscript has gone through: an “approximation to its value” (Contini 1937).

Although, since the arrival of the digital age, manuscripts have all been reproduced and preserved in a digital format (.gif, .tiff, .jpg), the high definition and improved quality of the graphic results do not provide an optimal deciphering of the crucial “Z” dimension, the time addendum represented by the emending stratigraphy. Nor, in particularly complex cases, do they make it possible to identify different types of stratigraphy: either on a single paper support, or on several paper supports. Let us examine the difference:

Some of the most significant case studies in the 3D THESMA Project – from Terence’s “Codice Bembino” to Petrarch’s “Codice degli Abbozzi”, Livy’s Harleian with Petrarch and Valla’s annotations, Ariosto’s autograph Fragments, Manzoni’s and Leopardi’s manuscripts of the Betrothed and the Canti, Carlo Emilio Gadda’s WWI notes and Montale’s Diario Postumo – cover the entirety of Western literature. Over the years, palaeographers and philologists have endeavoured to decipher the number or diachronic dimension of the different handwritings of these texts, be they by the author himself or by others, including hands of an apocryphal nature, or by subsequent editors who have modified or corrected the text. Other manuscripts have come up against material obstacles such as deterioration over time, the fading of the surface inks, dense erasures that have made it impossible to read the text underneath, or the impossibility of opening the manuscript without damaging it irremediably. How can we read under erasures, or distinguish between the different handwritings and inks? How can we discover writings that have deteriorated over time? How can we interpret texts concealed by sheets of paper that cannot be unstuck or writing between pages stuck together? Thanks to the association of hard and human sciences, and the technological application of photonics to the cultural heritage of the Western tradition, we can now offer a response to these questions.

A.3. DEVELOPMENT AND POTENTIALITIES OF THE PHILOLOGICAL DISCIPLINES

A thorough study of manuscripts carried out using 3D imaging representation and a Terahertz and Spectrometric analysis has already proved fruitful, as can be seen from the two years of research carried out by the Sapienza Multidisciplinary Project (2014-2016). This has given rise to major advances in all fields involving the study of manuscripts, specifically in classical, medieval and modern philology. The specific case studies of the 3D THESMA Project will cover a range of different cases and issues, each with specific problems connected with the relationship between the author and his/her manuscript, the way in which the text has been corrected, or the creative thinking applied to the white paper, since we can consider the manuscript as a “fingerprint” of creative thinking. The perspectives of the discipline and the different cases and issues concerned will be analysed individually below.

A.3.1. Classical Philology. Manuscripts of authors from Greek and Latin antiquity constitute documents of extraordinary value, both because they allow for the preservation of classical texts through to the modern era, and as an invaluable record of their history: in the pages of the manuscript, text by ancient authors is often accompanied by a plethora of corrections, notes, glosses, scholia, and illustrations. These graphic layers have built up over the centuries, and provide documentary evidence of a privileged history of reception and exegesis, sometimes dating all the way back to late antiquity, as is the case for certain manuscripts of Virgil and Cicero (edited in the 4th-5th centuries A.D.), the ‘Pluteano’ of Livy (5th century) and the ‘Bembino’ of Terence (4th-5th centuries A.D.), which will be the case study of this section.

The codex Vaticanus Latinus 3226, better known as Bembinus (owned by Pietro Bembo, hence the name), which was written in the 4th/5th centuries A.D., is an exceptional document, being one of the few ancient Latin manuscripts to have reached the modern age. It presents the comedies of Terence, and, with its rich history, testifies to the persistence of this author in the Western canon. Given its significance, the Bembinus has attracted the interest of many readers and scholars, including the ancient corrector Ioviales, a number of medieval commentators, and the famous humanist Angelo Poliziano. It displays a plethora of different handwritings, whose number, activity, and chronology are much debated: the three hands identified by Umpfenbach (1870), and dating back to the 5th, 10th and 15th c., conflict with the seven correctors reconstructed by Prete (1950), to which one should also add the hands of two scholiasts. The identification of the stratigraphies, which previous studies, both palaeographic and radioscopic, were unable to achieve, is an important desideratum, both for the editing and criticism of Terence’s text, and for the history of its reception from antiquity to the Renaissance. Reconstructing this history is no easy task: in the manuscripts, the various writing stages are blurred and muddled, and even the most expert scholar will encounter some difficulty in distinguishing one handwriting from another, attributing a precise date to a script and graphic style, and reconstructing the connections and points of contact between the texts. By using the technologies presented herein, significant results could therefore be achieved in the identification of stratigraphies, which is fundamental for reconstructing the history of classical texts.

A.3.2. Medieval Philology. The situation is no different for medieval manuscripts, which provide documentary evidence of the heritage of Western European culture in the varied phenomenology of Romance literatures. In this case, too, manuscript texts have been overlaid with various editions, comments, glosses and corrections, in addition to cases of illuminated texts, created either at the same time as or subsequent to the literary texts themselves. Italian literature boasts a particular abundance of manuscript documents, from the early 14th century with Petrarch’s ‘Codice degli Abbozzi’ (Vatican Library VL 3196), which documents the first edition of the Canzoniere, to Boccaccio’s “Hamiltoniano”, which preserves the final edition of the Decameron. In particular, we will examine the case study of Petrarch’s Codice degli abbozzi, which is an exceptional document that records the emending variants with which the poet constructed the definitive text, contained in manuscript V.L. 3195. The variants, which were first studied by Wilkins (1951), who concluded that the Canzoniere had passed through nine different stages, were then reduced to four (Paolino 2000), the most important of which are the “Chigi form” and the “Correggio form”. The study of Petrarch’s variants, developed by Gianfranco Contini, is one of the most important chapters of variant criticism. A scientific study of emending stratigraphy could lead to a precise identification of the forms of the text, and the resolution of one of the most important cases of European philology – thanks to the exemplary value of the Canzoniere both during the 16th century and later – which remains unsolved to this day.

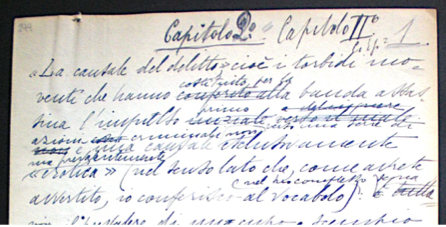

A.3.3. Humanistic Philology. While it was in the 14th century, thanks to Petrarch and Boccaccio, that autography became an integral, not to say fundamental, element of authorship, the 15th century witnessed an erosion of the borders of autography almost to the point of their disappearance, as a result of the often intimate collaboration between authors and the copyists working under their direction. In this case, too, without identifying the handwritings, it would be impossible to get to the heart of the textual traditions, which would remain a confused, indistinct jumble. When submitted to a technical analysis capable of providing scientific results, this form of research clearly also proves extremely fruitful: it is not possible to draw up an intellectual history of the 14th century without taking the writing into account, i.e. without truly delving into the thick of the humanistic writings. From this point of view, an analysis such as the one proposed here would make it possible to remove some recent doubts about Petrarch’s contribution to the corrections in the margins of the Livy manuscript: in fact, Valla not only signs his own notes with “LV”, but also attributes to Petrarch many notes of reading, correction and collation, some of which may not in fact be by him (Berté-Petoletti 2017). As a result of the humanists’ particular way of working – they returned to their texts and ancient sources endlessly, and adopted the practice of erasures and superimposed layers just as much as, if not more than, juxtapositions – a manuscript analysis method that makes it possible to rediscover emending deposits that can no longer be studied with the naked eye or using traditional instruments will ipso facto result in the recovery of entire areas of humanistic culture previously concealed under the surface of the manuscripts.
This is not to mention the actual recovery of the documents, in the many cases in which the fading or acidity of the ink has resulted in the physical loss of pages central to our cultural history.

A.3.4. Renaissance Philology. Given the centrality of the linguistic question, the study of the “Z” dimension, that is to say the variants in manuscript materials from the 15th and 16th centuries, is directly connected with issues of linguistic codification. One need merely call to mind, for instance, the exemplary nature of the philological problem of Castiglione’s Cortegiano, and of the revisions made for the purpose of linguistic normalisation, not to mention the autograph fragments of Ariosto’s Orlando Furioso (Ferrara, Biblioteca Ariostea). The latter constituted the very first historical test bench for authorial philology and a source of study for the birth of the critical study of variants with Gianfranco Contini’s celebrated essay Come lavorava l’Ariosto (How Ariosto Worked) in 1937, which will form the basis of the 16th-century case study under examination. The Debenedetti edition, which was recently republished in anastatic form, shows the limits of a textual analysis without recourse to high-definition digital reproduction. Given the numerous textual lacunae, the areas that cannot be reliably deciphered and, most importantly, the lack of order in the various emending series, the possibility of applying an in-depth analysis to Ariosto’s manuscript is highly attractive, offering new objective data capable of re-opening the issue of textual corrections. Along with the reconstruction of the various series of corrections, the application of new manuscript research technologies will help us to understand the birth of Ariosto’s great poem and the process of creation of the chivalrous epic.

A.3.5. Modern philology. In the 17th and 18th centuries, a wider availability of paper led to a significant increase in the number of manuscripts. What is more, the new status accorded to men of letters made it possible to preserve authorial archives, with a greater preservation of the materials used to prepare the texts before they were sent to print. The man of letters would also individualise his status through the act of writing, marking his authorship on the page and returning to his works in a process of continuous improvement and authorial rewritings, resulting in successive and differing editions and drafts of the manuscript text. Once again, the intrinsic connection with the question of language, which held a central position throughout the 19th century, means that a study of the emending layers does not only provide information relating to the genesis and evolution of the writing and the themes of the text, but also presents a historic diagram of individual linguistic uses and the interrelation between them, as well as of the general evolution of the national literary language. The case study of Manzoni’s The Betrothed (Milano, Biblioteca Braidense) brings this aspect to the fore in both literary and linguistic terms, given the interconnection between the issues related to the establishment of a national language and experimenting with a new genre, the novel. The various editions of I Promessi Sposi – from the rough draft of Fermo e Lucia, to the corrections to the manuscript for the first edition of the novel and those added by Manzoni to the copy for censorship – provide an exemplary documentary record of this process, which is at once literary and linguistic.
A scientific analysis of the emending series of I Promessi Sposi would make it possible both to resolve the problems connected with the dating of the text, which remain unsolved even after the 2006 critical edition, and to attribute the so-called “dubious variants” either to the first draft of the text (Fermo e Lucia) or to the second (Sposi Promessi). Even in the birth of modern poetry, which is both marked out and revived in tradition by Leopardi’s Canti (Naples, Biblioteca Vittorio Emanuele III), there is an intrinsic connection between the history of the emending stratigraphies of manuscripts and poetry’s unique engagement with form, with the continuous coming into being of the poetry on the author’s pages. The surprises that Leopardi’s manuscripts might hold in store, if examined using this new analysis technology, relate not only to the variants of the Canti, but also to the notes the author placed in the margins of the text, which sometimes record the various readings by which the verses were inspired, and the author’s stylistic and linguistic considerations. The original manuscript of the Zibaldone, a work recently translated into English, might also hold some important discoveries, since subsequent additions to the first draft often significantly modify the development of Leopardi’s thought. Not by chance, the examples of these major 19th-century authors establish a method for the study and representation of manuscript corrections, which has led to the foundation of Authorial Philology as a modern discipline for the study of authorial corrections (see the PI’s Wiki Open Access Editions of Leopardi’s work, available at: www.filologiadautore.it).

A.3.6. Twentieth-century philology. The dynamics of the 19th century took on a radical form in the 20th century, with an exponential increase in both the quantity of materials preserved by authors and available for study, and the importance of their variants. This was provoked, on the one hand, by the fragmented nature of the early 20th century and the crisis of the novel, and, on the other, by the poetics of modernism, which led to the arrival in Italy, from France, of the theorisation of the un-finished, a poetry dedicated to the process rather than the result, and whose point of arrival is always in progress. The study of variants and authorial handwritings is therefore an indispensable process carried out in parallel with the interpretation of the text itself. It is no longer conceived of as a datum but rather, thanks to the contribution of Gianfranco Contini’s critical study of variants, as a continuous “value approximation”, presented as a means of providing a scientific basis for the study of numerous cases of apocryphy both in Italian literature and in 20th-century European history, where the scientific analysis of manuscripts might resolve numerous cases of fakes, from Montale’s Diario postumo (Posthumous Diary), which is accused of being apocryphal, to the forged Diaries of Hitler and Mussolini. The scientific analysis of 20th-century manuscripts could also have a major impact on our knowledge about the connection between politics and literature, as illustrated by the PI’s recent critical edition of Gadda’s Eros e Priapo. A particularly abundant source of stratigraphies and sheets of paper or pages stuck together can be found in the papers of Carlo Emilio Gadda, an author who was in the habit of returning to his writings incessantly, adding variants, corrections and revisions, and many of whose texts remain unpublished. The papers left to Alessandro Bonsanti, and now held in Florence at the Archivio Contemporaneo of Gabinetto G.P. Vieusseux, were badly damaged by the 1966 flood in Florence. Although they were recovered in 2003, the flood damage has rendered many of the pages illegible. This is the case for one of the notebooks of the Giornale di Guerra e di Prigionia, Gadda’s WWI Notes (the case study of the Sapienza Project, 2014-2016), which has remained partly undiscovered to this day because it was completely washed out and deposited at the ‘Fondo Gadda’ in Florence. However, the Terahertz analysis technology could offer up numerous other discoveries, making it possible to examine texts concealed by sheets of paper in Gadda’s Cognizione del dolore and Adalgisa (at the Biblioteca Trivulziana, Milan).

A.4. A NEW METHOD TO STUDY MANUSCRIPTS

The aim of using the most advanced photonics techniques to analyse matter and inks with an integrated system (3D + THz & Spectrometry: the 3D T H E S M A Project) is to develop a new method to study manuscripts, analyse their “Z” dimension and give materiality to the third “time” dimension, without damaging their support, thanks to the safety of the THz ray, which has already been applied in the biomedical field. This will enable scholars to:

- emphasise the differences between subsequent corrections, giving visibility to stratigraphy (marking different layers or corrections with different colours), and bringing to light hidden text covered by erasures or successive corrections;

- discover texts hidden by cartouches, or between one page and another, which are stuck together and impossible to remove.

The resolution of the above-mentioned case studies, which concern some of the masterpieces of European literature ranging from the classical to the contemporary period, will also demonstrate how this new method can be used to develop a new framework to preserve manuscripts. For, with the digital age, such manuscripts have increasingly become archaeological finds, requiring a different approach and different storage methods, moving beyond the usual two-dimensional formats (.gif, .tiff and .jpg) towards three-dimensional ones (.obj, .lvs, .3ds). With 3D scanning, it will be possible to change the standard of digital reproduction and disseminate this not only to scholars (to resolve other unsolved case studies), but also to the civil community, who will thereby be able to appreciate the brilliance of 3D reproduction of the greatest manuscripts of the past, also through virtual restoration, raising awareness of Europe’s cultural heritage.

A.4.1. 3D Scanning system

Advanced technologies for the study, documentation and enhancement of cultural heritage are becoming increasingly widespread, and have been applied in cultural, artistic, archaeological, and juridical and forensic fields. They have not yet, however, been extended to the philological study of manuscripts, despite the fact that their third dimension, the “Z” dimension, can only be enhanced through 3D scanning. The FrameLab laboratory of the University of Bologna, partner of the 3D THESMA project, is at the forefront of the digital restoration of texts (manuscripts, papyri and epigraphs) and has already tested 3D schedules/applications (PALAMEDES project, which visualises the two scriptiones inferiores and superiores). The laboratory will thus be able to apply these technologies to the study of the above-mentioned philological cases and develop a 3D standard to be used for manuscripts of particular value both for study and conservation purposes. Using the technologies developed in the forensic field, with the methodology of the 3D laser interferometer (Conoscopic Hologram), the overlapping of successive written strokes, whose sequence cannot be evaluated with the naked eye or with normal photonic techniques (Wood’s lamp, UV, IR rays), will become evident. The strokes are scanned with a precision along the “Z” axis of a micrometre or better (submicron), and from the axonometric evaluation of the grooves generated by the graphic sign and of the surface alterations of the paper (where grooves are present), it will be possible to evaluate the chronology of corrections.
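As a purely illustrative sketch of the kind of measurement described above, the following Python fragment reads the depth of a groove off a single Z-axis height profile. The profile values, the function name `groove_depth_um` and the choice of the median as an estimate of the undisturbed paper surface are all assumptions made for this example; they do not describe the laboratory's actual procedure, and the sketch does not attempt to infer the chronology of corrections.

```python
from statistics import median


def groove_depth_um(profile_um):
    """Return the depth (in micrometres) of the deepest groove in a profile.

    `profile_um` is a sequence of surface heights sampled along a line that
    crosses a pen stroke. The undisturbed paper surface is estimated as the
    median height (an assumption that holds only if most samples lie
    outside the groove).
    """
    if not profile_um:
        raise ValueError("profile must contain at least one sample")
    baseline = median(profile_um)
    return baseline - min(profile_um)


# Hypothetical heights (micrometres) sampled across one stroke.
profile = [0.0, 0.1, -0.1, -2.4, -3.0, -2.2, 0.0, 0.1, 0.0]
print(f"groove depth: {groove_depth_um(profile):.1f} micrometres")
```

Comparing such depths where two strokes cross is one conceivable input to a chronology assessment, but any such rule would need to be validated on real scans.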

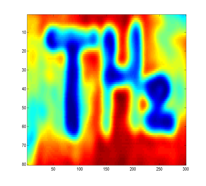

A.4.2. A double technology: Terahertz & Spectrometry

Terahertz waves are electromagnetic waves of a much higher frequency than the microwaves used in radars and mobile phones (Gigahertz), but much lower than thermal infrared radiation (tens of Terahertz). Terahertz waves have attracted considerable interest in the last decade because they display a capacity to penetrate opaque materials similar to microwaves, but allow for imaging at diffraction-limited resolutions of less than a millimetre (comparable to their wavelength in vacuo). Moreover, they are not harmful for the operator, unlike X-rays and lasers, and can nowadays be generated by portable compact devices, derived from space technology developed by NASA, ESA, and related companies, and recently delivered to the market. The recent development of lasers and video cameras operating with a wavelength of 100 microns to 1 mm – the so-called Terahertz range – provides a penetration depth of several centimetres, with a millimetric resolution. This has already been adopted in non-destructive tests on materials, security checks, and biomedical diagnostics. The research objective is to implement a Terahertz detection system to detect handwritten symbols through thick layers of paper, and thereby demonstrate that a manuscript can in fact be “read through the cover”, minimising the risk of damage to the documents. The new Terahertz technique will be combined with visible light video cameras and cutting-edge yet tried and tested spectrometric technologies, which will provide digital images of the text of the highest definition. The multi-spectral acquisition system improves the readability of texts, allowing for the extraction of hidden information through computer-aided aligning, comparing and enhancing of different spectral images. For example, faded ink will leave a spectrally resolved signature that stands out both in reflectography and fluorescence images. 
Spectral imaging is routinely implemented in numerous scientific and applicative fields, such as sensing and medical diagnosis.
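The wavelength range quoted above (100 microns to 1 mm) can be related to frequency through the standard relation f = c / λ. The short Python sketch below performs that conversion; the function name `wavelength_to_thz` is an illustrative choice, and the only physical input is the defined value of the speed of light in vacuum.

```python
# Speed of light in vacuum (exact SI value), in metres per second.
C = 299_792_458.0


def wavelength_to_thz(wavelength_m):
    """Convert a vacuum wavelength in metres to a frequency in terahertz."""
    if wavelength_m <= 0:
        raise ValueError("wavelength must be positive")
    return C / wavelength_m / 1e12


# The two edges of the range mentioned in the text.
for label, wavelength_m in [("100 microns", 100e-6), ("1 mm", 1e-3)]:
    print(f"{label}: {wavelength_to_thz(wavelength_m):.2f} THz")
```

Under this relation, the range runs from roughly 3 THz at 100 microns down to roughly 0.3 THz at 1 mm.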

A.4.3. An integrated system: 3D digitalising + Terahertz and Spectrometry Analysis. The project objective is to implement the Terahertz detection system, which was developed during the two-year Sapienza Research Grant (2014-2016). A first prototype of the new Terahertz technique, combined with visible light video cameras and cutting-edge yet tried and tested spectrometric technologies, was presented at ENEA-Frascati (Roma), the ThZ-Arte International Workshop in 2014, chosen from seventeen Sapienza projects exhibited at the Maker Faire 2015, and displayed during the international conference ECD/DCE edizioni a confronto-comparing editions in Rome, on 27 March 2015 (open access proceedings edited by the PI), and in Paris, on 23 November 2015 (Université Sorbonne Nouvelle Paris 3 – Séminaire “Critique génétique et philologie d’auteur”). The aim of the ERC-AD project is to implement this prototype, and to combine THz and Spectrometric technology with a 3D laser scanner. Though this has recently been used for court expert detection purposes, it has never been applied to literary manuscripts. This new technology will provide extraordinary 3D digital images of the highest definition in “XYZ” (500 nm), which could be used not only to solve the above-mentioned philological case studies, but also to define the theoretical framework for a new standard of 3D digitisation that will soon become essential to study and store particularly precious manuscripts.